How to download 404 errors from Google Search Console with Linking pages

Update: Sadly, Google has discontinued the Google Search Console API Explorer, so this method no longer works.

The Google Search Console is a great help to webmasters, with useful features such as sitemap submission, search analytics, links to your site, and the crawl errors report. With the GSC, SEO specialists have a powerful tool at their fingertips to guide their SEO work.

However, the Google Search Console also has several frustrating aspects. For example, the “Links to your site” feature shows a maximum of 1,000 domains. Now imagine how many domains link to a site like Moz or Buzzfeed! Do you think the Google Search Console serves them well?

The sitemap tool shows how many URLs were indexed but does not tell you which URLs are indexed and which are not. There are other similar annoyances. (Update: Google is testing a beta feature called Index Coverage report that will show the indexed pages count and the reasons why some pages could not be indexed. Read more on Google Webmaster Blog.)

Another point that annoys Google Search Console (GSC) users is the Crawl Errors report. The Search Console shows us the crawl errors, the HTTP status code of each error (404, 503, etc.), and also the source of the error. The bothersome part is that you have to click on each URL to see where the error originates.

Another problem is the 1,000-error limit. You’ll have to fix the existing errors before you can view newer ones.

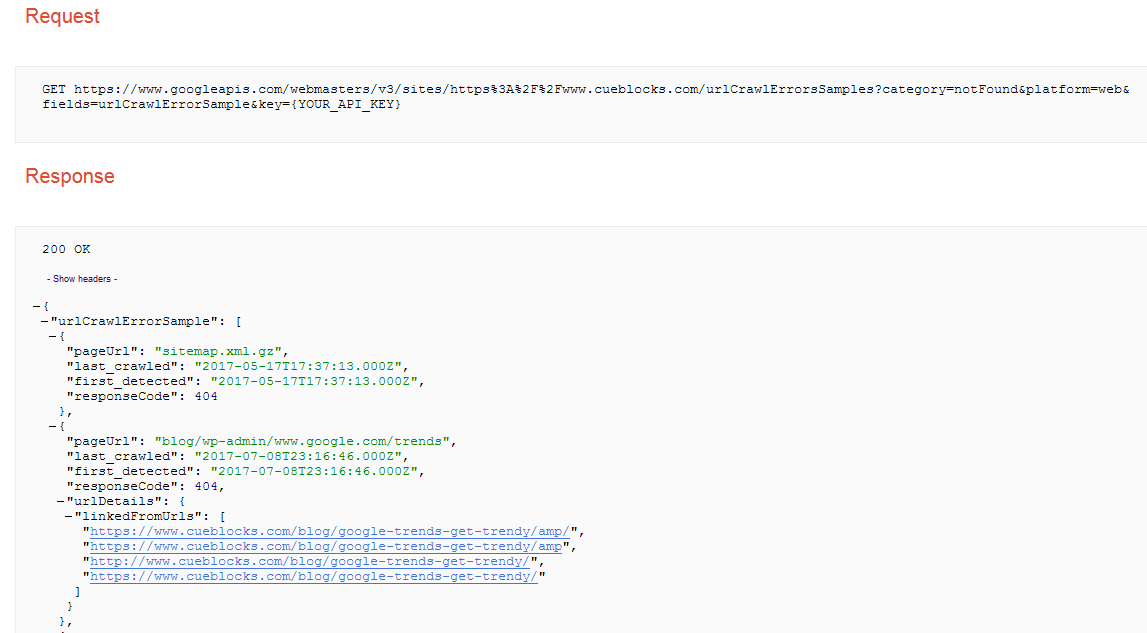

Solution: Download crawl errors with source using Google API Explorer

The Google API explorer is a savvy tool to communicate with numerous Google APIs. But we need to concern ourselves only with the Search Console API.

You’ll need a Search Console account with Full access to the property before you can use the API, so make sure you have both beforehand.

Once logged in, you’ll notice the Search Console API offers several services, 13 to be exact. Our focus here is the one labeled webmasters.urlcrawlerrorssamples.list, so go ahead and click on it.

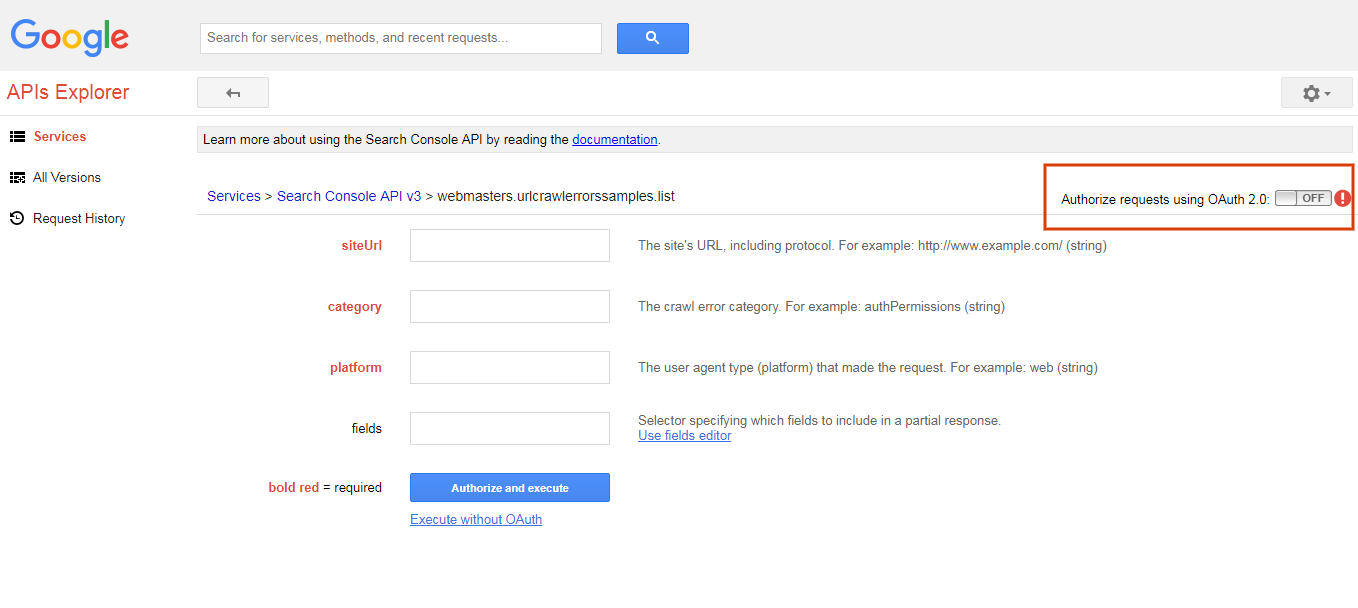

The next screen will look like the image below. Beyond this point it’s a simple 3-step process:

1. Fill in the parameters and Execute the query

The fields you notice on the next screen read as follows:

- Site URL – The URL of your site as it appears in Search Console

- Category – The type of error you’d like to filter by. Luckily for us, it offers an easy-to-use drop-down with the possible values. For 404 errors, choose notFound.

- Platform – The user agent, or simply the type of device, you wish to retrieve errors for. Again, an easy drop-down helps us out. We’ll be selecting web for now.

- Fields – The data you want to retrieve along with the crawl errors, such as the source, the date the error was first detected, the last crawled date, the error code, etc.

Use the fields editor to select required fields. The available ones are:

- urlCrawlErrorSample – Provides information about the sample URL and its crawl error

- first_detected – The date when the error was first detected

- last_crawled – The date when the URL was last crawled

- pageUrl – The URL of crawl error

- responseCode – The HTTP response code returned for the URL

- urlDetails – To retrieve more details about the error URL. Selecting it gives you two options:

  - containingSitemaps – Sitemap URLs pointing to the crawl error

  - linkedFromUrls – Source of the crawl error. The root of all the fuss!

I prefer selecting all the data and filtering it down later. You are free to choose your path.
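For readers who want to see what the form amounts to, here is a minimal sketch of the request it builds. The base URL and path are assumptions based on how the v3 Search Console API was laid out, `build_samples_url` is a hypothetical helper name, and the endpoint itself has since been discontinued, so treat this as an illustration of how the parameters fit together rather than something to run against Google today.

```python
from urllib.parse import quote, urlencode

# Assumed base URL of the (now discontinued) Search Console API v3.
API_BASE = "https://www.googleapis.com/webmasters/v3"

# Partial-response selector covering every field listed above.
ALL_FIELDS = (
    "urlCrawlErrorSample(first_detected,last_crawled,pageUrl,"
    "responseCode,urlDetails(containingSitemaps,linkedFromUrls))"
)


def build_samples_url(site_url, category="notFound", platform="web", fields=ALL_FIELDS):
    """Return the GET URL for a crawl error samples query (hypothetical helper)."""
    # The site URL is a single path segment, so reserved characters are escaped.
    site = quote(site_url, safe="")
    query = urlencode({"category": category, "platform": platform, "fields": fields})
    return f"{API_BASE}/sites/{site}/urlCrawlErrorsSamples?{query}"


if __name__ == "__main__":
    print(build_samples_url("https://www.example.com/"))
```

Selecting every field up front, as the sketch does by default, matches the "grab everything, filter later" approach.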

Oh! Do turn on the Authorize requests using OAuth 2.0 toggle at the top right (marked with an orange rectangle in the image).

Alternatively, once all the fields are set, just click the Authorize and execute button.

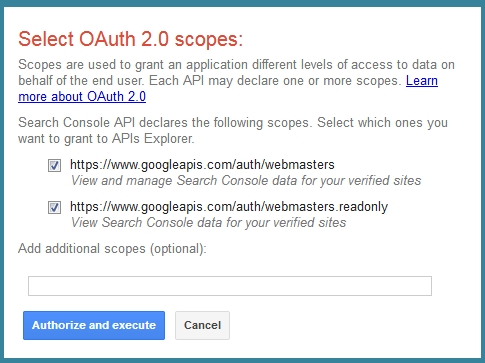

You’ll be greeted with a popup before the report runs, like the one in the image below:

Check both options and hit the Authorize and execute button.

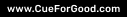

2. Convert the output JSON to a CSV

If everything goes well, you should see a status 200 OK message with a JSON output similar to the image below.

Copy the whole output, from the first curly bracket { through to the last curly bracket } at the end.

Now, head over to a JSON to CSV converter. I prefer https://konklone.io/json/ for the simple interface, a preview window, and the ability to download the CSV.

Note: I tried another converter too, but its report wasn’t 100% accurate. Test your converter before you settle on a report.

Paste the JSON output and convert it into a CSV.
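If you would rather not depend on an online converter, the same conversion can be sketched in a few lines of Python. This assumes the output has the shape described by the fields above: a top-level `urlCrawlErrorSample` list whose items may carry a `urlDetails` object. The function name `samples_to_csv`, the column order, and the " | " separator are my own choices, not part of the API.

```python
import csv
import io
import json

# Column order for the CSV; the names mirror the fields selected earlier.
COLUMNS = ["pageUrl", "responseCode", "first_detected", "last_crawled",
           "linkedFromUrls", "containingSitemaps"]


def samples_to_csv(json_text):
    """Flatten the JSON output into CSV text, one row per error URL."""
    samples = json.loads(json_text).get("urlCrawlErrorSample", [])
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    for sample in samples:
        details = sample.get("urlDetails", {})
        writer.writerow({
            "pageUrl": sample.get("pageUrl", ""),
            "responseCode": sample.get("responseCode", ""),
            "first_detected": sample.get("first_detected", ""),
            "last_crawled": sample.get("last_crawled", ""),
            # Several pages can link to one broken URL; join them in one cell.
            "linkedFromUrls": " | ".join(details.get("linkedFromUrls", [])),
            "containingSitemaps": " | ".join(details.get("containingSitemaps", [])),
        })
    return buffer.getvalue()


if __name__ == "__main__":
    example = ('{"urlCrawlErrorSample": [{"pageUrl": "old-page", "responseCode": 404, '
               '"urlDetails": {"linkedFromUrls": ["https://www.example.com/blog/"]}}]}')
    print(samples_to_csv(example))
```

Missing fields simply become empty cells, which is what you want when some errors have no reported source.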

3. Download the CSV and Fix 404 errors

This is pretty much self-explanatory! Download your CSV and start fixing those 404 errors.

Word to the wise: Google Search Console may not list sources or linking domains for all errors. So don’t worry if some rows returned are empty.
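When it comes to fixing, it often helps to flip the report around and look at it per linking page, since one page can point to many broken URLs. A rough sketch, assuming each sample is a dict shaped like the JSON output above; `broken_links_by_source` and the placeholder label are hypothetical names of mine:

```python
from collections import defaultdict


def broken_links_by_source(samples):
    """Map each linking page to the list of broken URLs it points to."""
    by_source = defaultdict(list)
    for sample in samples:
        sources = sample.get("urlDetails", {}).get("linkedFromUrls", [])
        # Errors with no reported source are grouped under a placeholder key.
        for source in sources or ["(no source reported)"]:
            by_source[source].append(sample["pageUrl"])
    return dict(by_source)


if __name__ == "__main__":
    report = [
        {"pageUrl": "old-page", "urlDetails": {"linkedFromUrls": ["https://www.example.com/blog/"]}},
        {"pageUrl": "gone", "urlDetails": {"linkedFromUrls": ["https://www.example.com/blog/"]}},
        {"pageUrl": "orphan"},
    ]
    for source, urls in broken_links_by_source(report).items():
        print(source, urls)
```

Pages that link to the most broken URLs are usually the quickest wins.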

Conclusion:

404 errors may not seem critical, but fixing them helps save crawl budget, improves user experience, and also helps retain link value.

You may use the same process to find and fix other errors. The API covers 9 error categories from the Google Search Console. You don’t have to rack your brain to search them out; we have listed all the error types below.

- authPermissions

- flashContent

- manyToOneRedirect

- notFollowed

- notFound

- other

- roboted

- serverError

- soft404

I hope you find this useful! Now head to your laptop and start fixing those 404 errors.


I am part of the CueBlocks Organic Search (SEO) team. I love playing football and reading about Technical SEO.

10 Replies to “How to download 404 errors from Google Search Console with Linking pages”


Unfortunately, the API method does not work any more as the API call has been deprecated 🙁

Thanks for this post, works excellent! Helped a lot and prevented me from writing my own script ;).

Thanks very much for this excellent post Rajiv! Just tried it and it’s very handy! I was just wondering when you think you’ll find a method to extract more than 1,000 URLs, as that would be ideal for larger websites! Thanks again and all the best! Alex

Thank you, Alex!

I am currently working on it. It appears a lot of us run into 404 errors in bulk. Will be posting the solution for 1000 plus URLs soon, hopefully.

Cheers!

Rajiv

Hi Rajiv,

Great walk-through of the API.

Using the Search Console interface I was able to download 1,325/5,929 errors while the API only returned 1,000. Any idea what I’ve missed?

Thanks again – JR

Hi John,

Thank you! Unfortunately, the API explorer seems to be limited to 1000 results only. I am working on a way around this. I’ll make it another blog post once it is complete.

Rajiv

Thanks Rajiv.

This worked a treat, first time.

Glad to know you found it useful, Ewan

Hi,

It’s working!

Thanks for showing the easy way to download it.

Great stuff.

Regards,

Malisa

Thank you, Malisa!